Advancing AI with whatever is available

After Chinese companies lost access to Nvidia's leading-edge A100 and H100 compute GPUs, which can be used to train various AI models, they had to find ways to train those models without the most advanced hardware. To compensate for the lack of powerful GPUs, Chinese AI developers are instead simplifying their programs to reduce hardware requirements and combining all the compute hardware they can get, The Wall Street Journal reports.

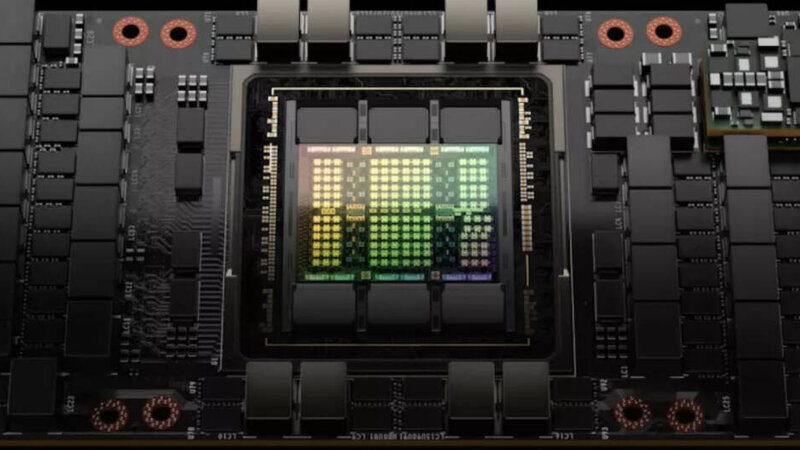

Nvidia's A800 and H800 processors

Nvidia cannot sell its A100 and H100 compute GPUs to Chinese entities such as Alibaba or Baidu without obtaining an export license from the U.S. Department of Commerce (and any application would likely be denied). Nvidia has therefore developed A800 and H800 processors, which offer reduced performance and come with restricted NVLink capabilities. This limits the ability to build the high-performance multi-GPU systems typically needed to train large-scale AI models.

For example, training the large language model behind OpenAI's ChatGPT requires between 5,000 and 10,000 of Nvidia's A100 GPUs, according to estimates by UBS analysts cited by the WSJ. Because Chinese developers do not have access to A100s, they combine the less capable A800s and H800s to achieve something close to the performance of Nvidia's higher-end GPUs, according to Yang You, a professor at the National University of Singapore and founder of HPC-AI Tech. In April, Tencent introduced a new computing cluster that uses Nvidia's H800s for large-scale AI model training. This approach can be expensive: Chinese firms may need three times as many H800s as their U.S. counterparts would need H100s to achieve similar results.

Because of high costs and the inability to physically obtain all the GPUs they need, Chinese companies have devised ways to train large-scale AI models across different chip types, something U.S.-based companies rarely do because of technical reliability concerns and other challenges. For example, companies such as Alibaba, Baidu, and Huawei have explored using combinations of Nvidia's A100s, V100s, and P100s, along with Huawei's Ascend chips, according to research papers reviewed by the WSJ.

Although many companies in China are developing processors for AI workloads, their hardware is not backed by robust software platforms such as Nvidia's CUDA, which is why machines based on these chips are reportedly "prone to crashing."

Chinese firms have also been more aggressive in combining various software techniques to reduce the computational requirements of training large-scale AI models, an approach that has yet to gain traction elsewhere in the world. Despite the challenges and ongoing refinements, Chinese researchers have seen some success with these methods.

Latest-generation model

In a recent paper, Huawei researchers described training their latest-generation large language model, PanGu-Σ, using only Ascend processors and no Nvidia compute GPUs. Although there were some shortcomings, the model achieved state-of-the-art performance on a few Chinese-language tasks, such as reading comprehension and grammar tests.

Analysts warn that Chinese researchers will face growing difficulties without access to Nvidia's new H100 chip, which includes an additional performance-enhancing feature that is especially useful for training ChatGPT-like models. Meanwhile, a paper published last year by Baidu and Peng Cheng Laboratory showed that researchers were training large language models using a method that could make this extra feature irrelevant.

"If it works well, they can effectively circumvent the sanctions," Dylan Patel, chief analyst at SemiAnalysis, reportedly said.